Tomer Ashuach

Researcher at Technion – Israel Institute of Technology


I’m a PhD student at the Technion, advised by Prof. Yonatan Belinkov. My research focuses on the interpretability of language models, with particular emphasis on uncovering their internal mechanisms and understanding how knowledge is acquired and how it can be unlearned.

Research Interests

- Interpretability in LLMs

- Knowledge and Unlearning in LLMs

- AI Safety and Alignment

Publications

2026

- ACL 2026

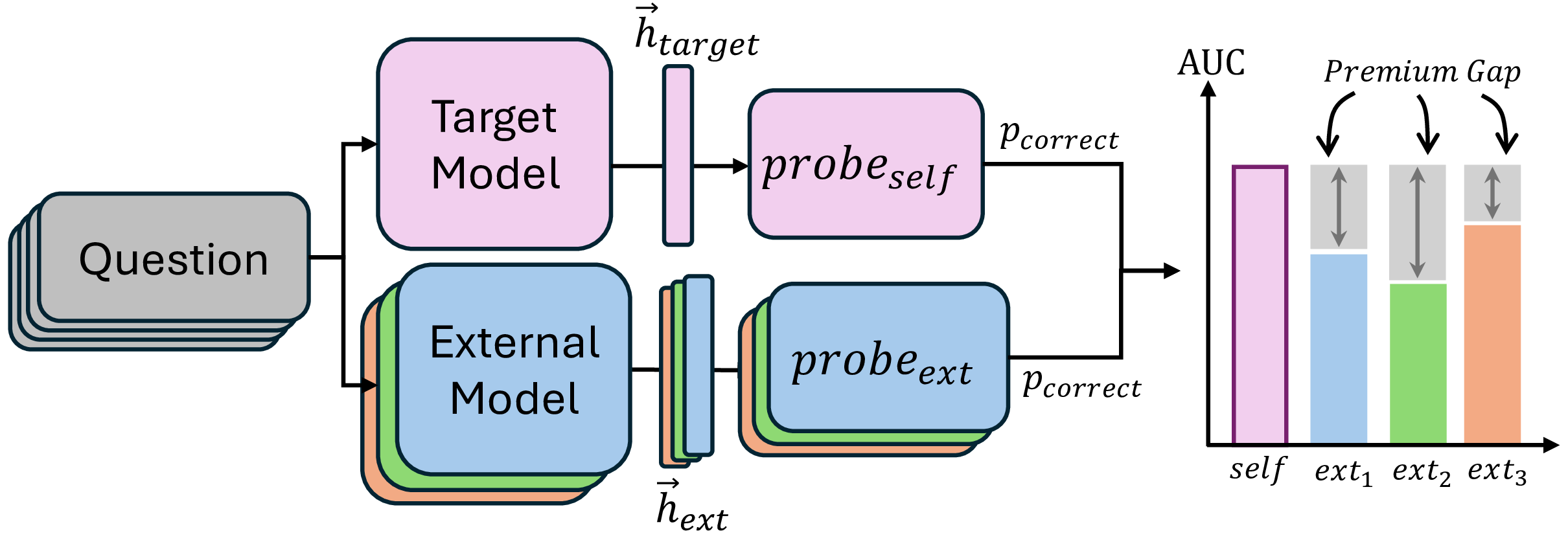

In Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics: ACL 2026, Dec 2026

We explore whether large language models possess privileged internal knowledge about their own correctness, analogous to human introspection. By evaluating models on conflicting predictions, we discover that LLMs exhibit a domain-specific intuition for factual knowledge tasks that external observers cannot access.

- ACL 2026

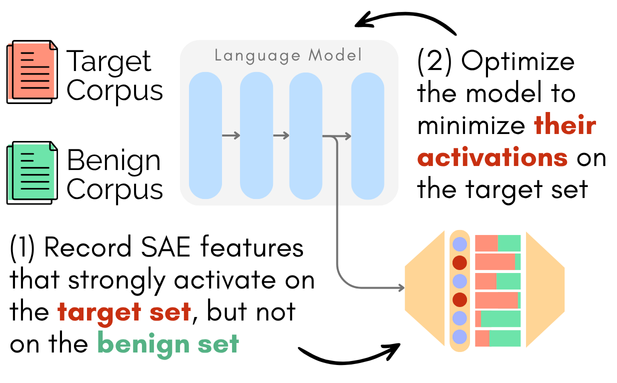

In Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics: ACL 2026, Jan 2026

We introduce CRISP, a parameter-efficient method leveraging sparse autoencoders to achieve persistent concept unlearning in LLMs. By automatically identifying and suppressing salient features, CRISP successfully removes harmful knowledge while maintaining model utility.


2025

- ACL Findings 2025

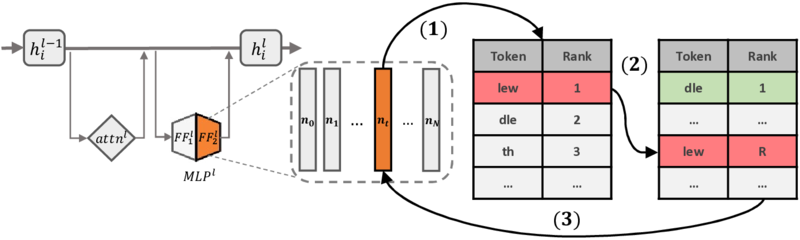

In Findings of the Association for Computational Linguistics: ACL 2025, May 2025

We propose REVS, a non-gradient-based method that unlearns sensitive information from language models by identifying and modifying a small subset of relevant neurons. REVS achieves robust unlearning and resists extraction attacks while preserving the model’s underlying integrity.
